In 2020, at the end of the previous group of editors’ terms, I did a quick survey of one year’s output in the American Sociological Review to see how the journal was doing on basic standards of research transparency and replicability. The results were dismal, with 4 out of 15 quantitative data analysis papers providing data and code sufficient to replicate the papers (at a glance; I didn’t test their code), and none of the mixed-method or qualitative papers providing research materials such as analysis code, interview guides, survey instruments, or transcripts.

I just repeated my tiny, poor-quality study using volume 88 of the journal (2023), now with a different editorial team (Arthur Alderson and Dina Okamoto, who have since finished their term). The results are about the same. Out of 36 total papers, I see 8 replication packages for 25 quantitative data analysis articles. Six papers provide less, like partial data availability or some variable codes. One says code is available “upon request,” and one says there is a replication package on the author’s personal website, but it’s not there. Eleven of the 25 quantitative papers provide nothing. One mixed-methods paper provides a replication package and three provide nothing. One of the 6 qualitative papers provides interview guides and protocols, and the rest provide nothing. The full results are at the end of this post.*

I used to work like that, in the early days of the Internet. We published tables of results, guaranteed truthful by the honor system. (God knows how many people made up their results.) I honestly can’t imagine going back to it now. I would simply be too embarrassed to publish a statistical analysis without providing the code and at least a link to the data. That’s the way norms are, I guess: they get into your head. And I guess the discipline of sociology is in a place where very different norms coexist side by side. That seems untenable. (Related: The open access journal Sociological Science has recently implemented a replication package requirement for “articles relying on statistical or computational methods” [they also publish qualitative and theoretical work].)

Also, it’s quite bad that none of the papers that don’t provide basic research materials with their work (not a single case I could find) even explain why they don’t. It’s a basic norm in social science (outside of American sociology) that if you can’t provide data, code, and other research materials, you explain why. Not even offering an explanation is a bad look for ASR and the American Sociological Association. This is the flagship journal of the association. People get tenure for these papers. If the journal required it, people would do it, and we would all benefit.

I make this case in much more depth in my forthcoming book, Citizen Scholar, including in one chapter on open science, which I have posted in draft form. More briefly, here is what I wrote about this in 2020, sadly still relevant today:

Why does that matter?

First, providing things like interview guides, coding schemes, or statistical code is helpful to the next researcher who comes along. It makes the article more useful in the cumulative research enterprise. Second, it helps readers identify possible errors or alternative ways of doing the analysis, which would be useful both to the original authors and to subsequent researchers who want to take up the baton or do similar work. Third, research materials can help people determine if maybe, just maybe, and very rarely, the author is actually just bullshitting. I mean literally, what do we have besides your word as a researcher that anything you’re saying is true? Fourth, the existence of such materials, and the authors’ willingness to provide them, signals to all readers a higher level of accountability, a willingness to be questioned, as well as a commitment to the collective effort of the research community as a whole. And, because it’s such an important journal, that signal might boost the field’s overall reputation for reliability and trustworthiness.

There are vast resources, and voluminous debates, about what should be shared in the research process, by whom, for whom, and when, and I’m not going to litigate it all here. But there is a growing recognition in (almost) all quarters that simply providing the “final” text of a “publication” is no longer the state of the art in scholarly communication, outside of some very literary genres of scholarship. Sociology is really very far behind other social science disciplines on this. And, partly because of our disciplinary proximity to the scholars who raise objections like those I mentioned above, even those of us who do the kind of work where openness is most normative (like the papers below that included replication packages) can’t move forward with disciplinary policies to improve the situation. ASR is paradigmatic: several communities share this flagship journal, whose policies serve some more than others.

What policies should ASA and its journals adopt to be less behind? Here are a few: adopt TOP badges, as the American Psychological Association has; have their journals actually check the replication code to see that it produces the claimed results, as the American Economic Association does; publish registered reports (peer review before results are known), as journals across the experimental sciences are doing; and post peer review reports, as Nature journals, PLOS, and many others do. Just a few ideas.

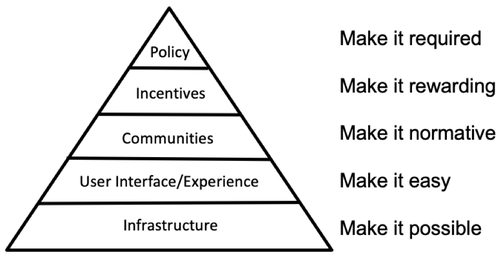

Change is hard, even if we could agree on the direction of change. Brian Nosek, director of the Center for Open Science (COS), likes to share this pyramid, which illustrates their “strategy for culture and behavior change” toward transparency and reproducibility. The technology has improved so that the lowest two levels of the pyramid are pretty well taken care of. For example, you can easily put research materials on COS’s Open Science Framework (with versioning, linking to various cloud services, and collaboration tools), post your preprint on SocArXiv (which I direct), and share them with the world in a few moments, for free. Other services are similar. The next levels are harder, and that’s where we in sociology are currently stuck.

For some how-to reading, consider Transparent and Reproducible Social Science Research: How to Do Open Science, by Garret Christensen, Jeremy Freese, and Edward Miguel (or this Annual Review piece on replication specifically). For an introduction to scholarly communication in sociology, try my report, Scholarly Communication in Sociology. Please feel free to post other suggestions in the comments.

2023 results details: Volume 88

Please note: no one is paying me or delegating this task to me, I didn’t give this much time, and I could have made mistakes; I will gladly correct anything I got wrong. ASA should be doing this reporting, but they don’t want to. When I was on the Publications Committee, several of us spent several years trying to get ASA to adopt a policy simply to notify readers whether authors were providing these materials (detailed here). Two subcommittees later, we finally had a policy passed, and the elected Council of the association shitcanned it without so much as a public comment.

| Authors | Article title | Type of analysis | Data source | Full package | Nothing | Other |
| --- | --- | --- | --- | --- | --- | --- |
| Best, Rachel Kahn; Arseniev-Koehler, Alina | The Stigma of Diseases: Unequal Burden, Uneven Decline | Quant | News articles | GitHub | | |
| Biegert, Thomas; Kuhhirt, Michael; Van Lancker, Wim | They Can’t All Be Stars: The Matthew Effect, Cumulative Status Bias, and Status Persistence in NBA All-Star Elections | Quant | Basketball stats | OSF | | |
| Lersch, Philipp M. | Change in Personal Culture over the Life Course | Quant | Cross National Equivalent File | OSF | | |
| Engzell, Per; Mood, Carina | Understanding Patterns and Trends in Income Mobility through Multiverse Analysis | Quant | Swedish registry | OSF | | |
| Cha, Youngjoo; Weeden, Kim A.; Schnabel, Landon | Is the Gender Wage Gap Really a Family Wage Gap in Disguise? | Quant | SIPP | OSF | | |
| Huang, P.; Butts, C. T. | Rooted America: Immobility and Segregation of the Intercounty Migration Network | Quant | ACS | Dataverse | | |
| Haupt, A. | Who Profits from Occupational Licensing? | Quant | CPS/BIBB | OSF | | |
| Nelson, Laura K.; Brewer, Alexandra; Mueller, Anna S.; O’Connor, Daniel M.; Dayal, Arjun; Arora, Vineet M. | Taking the Time: The Implications of Workplace Assessment for Organizational Gender Inequality | Quant | Digital trace data + text | | X | |
| Schneiberg, Marc; Goldstein, Adam; Kraatz, Matthew S. | Embracing Market Liberalism? Community Structure, Embeddedness, and Mutual Savings and Loan Conversions to Stock Corporations | Quant | Admin data / Census | | X | |
| Leahey, Erin; Lee, Jina; Funk, Russell J. | What Types of Novelty Are Most Disruptive? | Quant | Web of Science & text corpus | | X | |
| Cheng, Mengjie; Smith, Daniel Scott; Ren, Xiang; Cao, Hancheng; Smith, Sanne; McFarland, Daniel A. | How New Ideas Diffuse in Science | Quant | Web of Science | | X | |
| Cole, Wade M.; Schofer, Evan; Velasco, Kristopher | Individual Empowerment, Institutional Confidence, and Vaccination Rates in Cross-National Perspective, 1995 to 2018 | Quant | Secondary cross-national | | X | |
| Donahue, S. T. | The Politics of Police | Quant | Linked admin data | | X | |
| VanHeuvelen, Tom | The Right to Work and American Inequality | Quant | PSID | | X | |
| Parolin, Zachary; Desmond, Matthew; Wimer, Christopher | Inequality Below the Poverty Line since 1967: The Role of the US Welfare State | Quant | CPS | | X | |
| Henaut, Leonie; Lena, Jennifer C.; Accominotti, Fabien | Polyoccupationalism: Expertise Stretch and Status Stretch in the Postindustrial Era | Quant | Art graduate survey | | X | |
| Zhou, Xiang; Pan, Guanghui | Higher Education and the Black-White Earnings Gap | Quant | NLSY, IPEDS | | X | |
| Yu, W.; Yan, H. X. | Effects of Siblings on Cognitive and Sociobehavioral Development: Ongoing Debates and New Theoretical Insights | Quant | NLSY | | X | |
| Caron, L.; McAvay, H.; Safi, M. | Born Again French: Explaining Inconsistency in Citizenship Declarations in French Longitudinal Data | Quant | French Census | | X | |
| Zhang, Simone; Johnson, Rebecca A. | Hierarchies in the Decentralized Welfare State: Prioritization in the Housing Choice Voucher Program | Quant | Policies | Dataverse | | |
| Zhang, Letian | Racial Inequality in Work Environments | Quant | Proprietary data / own survey / EEO-1 / GSS | | | Says package available on personal website, but it’s not there |
| Karell, Daniel; Linke, Andrew; Holland, Edward; Hendrickson, Edward | Born for a Storm: Hard-Right Social Media and Civil Unrest | Quant | Social media / crowdsourced / Census | | | Video transcripts shared |
| Rivera, Lauren A. A.; Tilcsik, Andras | Not in My Schoolyard: Disability Discrimination in Educational Access | Quant | Audit study | | | Pretest survey shared |
| Jorgenson, Andrew K.; Clark, Brett; Thombs, Ryan P.; Kentor, Jeffrey; Givens, Jennifer E.; Huang, Xiaorui; El Tinay, Hassan; Auerbach, Daniel; Mahutga, Matthew C. | Guns versus Climate: How Militarization Amplifies the Effect of Economic Growth on Carbon Emissions | Quant | Secondary cross-national | | | Code available upon request |
| Chu, James | Clarity from Violence? Intragroup Aggression and the Structure of Status Hierarchies | Quant | Original school network | | | Two survey items shared |
| Swindle, Jeffrey | Pathways of Global Cultural Diffusion: Mass Media and People’s Moral Declarations about Men’s Violence against Women | Mixed | News / DHS | Dropbox | | |
| Hsiao, Yuan; Leverso, John; Papachristos, Andrew V. | The Corner, the Crew, and the Digital Street: Multiplex Networks of Gang Online-Offline Conflict Dynamics in the Digital Age | Mixed | Social media / admin data | | X | |
| Watson, J. | Standardizing Refuge: Pipelines and Pathways in the U.S. Refugee Resettlement Program | Mixed | Admin data / interviews | | X | |
| Ranganathan, Aruna; Das, Aayan | Marching to Her Own Beat: Asynchronous Teamwork and Gender Differences in Performance on Creative Projects | Mixed | Ethnography / interviews / field experiment | | X | |
| McCabe, Brian J. | Ready to Rent: Administrative Decisions and Poverty Governance in the Housing Choice Voucher Program | Qual | Interviews | | X | |
| Fink, Pierre-Christian | Caught between Frontstage and Backstage: The Failure of the Federal Reserve to Halt Rule Evasion in the Financial Crisis of 1974 | Qual | Historical / archival | | X | |
| Sirois, Catherine | Contested by the State: Institutional Offloading in the Case of Crossover Youth | Qual | Ethnography / interviews | | X | |
| Harvey, Peter Francis | Everyone Thinks They’re Special: How Schools Teach Children Their Social Station | Qual | Ethnography / interviews | | X | |
| Chiarello, E. | Trojan Horse Technologies: Smuggling Criminal-Legal Logics into Healthcare Practice | Qual | Interviews | | X | |
| Brensinger, Jordan | Identity Theft, Trust Breaches, and the Production of Economic Insecurity | Qual | Interviews | | | Protocols (Dataverse) |
| Menjivar, Cecilia | State Categories, Bureaucracies of Displacement, and Possibilities from the Margins | Theory | No data | | | |

* Results were updated a few hours after the initial post when I discovered I left out issue 6 of volume 88. I sort of regret the error. Results were updated again after Simone Zhang pointed me to their replication package (ASR should really put all these links in the same place with the same label).

Cha, Youngjoo, Kim A. Weeden, and Landon Schnabel, “Is the Gender Wage Gap Really a Family Wage Gap in Disguise?” is in Vol 88 (6). It’s not listed above.

Replication code (“reproduction” code, technically, but that sounds weird to me) is on OSF. Data is (public use) SIPP 2018. The package includes all the code to get from the raw data file to all results, including those in footnotes and the supplemental appendix.

Not that 5 out of 21 is all that much better than 4 out of 20…


Tx! Fixed. It is weird that different people use replication and reproduction in opposite ways.


I’m still disappointed in the American Sociological Review after the episode described here: http://www.stat.columbia.edu/~gelman/research/published/ChanceEthics8.pdf


Thanks for sharing – I don’t remember that story. I have twice submitted “comments” to ASR pointing out clear errors in published papers, and both times, after peer review saying I was right, the editors decided the comment was not important enough to waste pages on (“pages” being some dumb legacy concept old people use). It’s bad!


The scarce element in journal space is not physical pages; it’s academic prestige. My impression when they rejected my letter was that they felt that publication in ASR conveys prestige, and they didn’t want to devalue that coin by minting it too many times. Which is a legitimate concern! It kind of annoyed me, though, first because that meant that they were not correcting an error they had published, and second because, as a non-sociologist, I get close to zero academic prestige from publishing in sociology journals: I do it entirely as a public service!


Part of the editors’ response to me was this: “We must consider not only the merits of the arguments and evidence in the submitted comment, but also whether the comment is important enough to occupy space that could otherwise be used for publishing new research. With these factors in mind, we feel that the main result that would come from publishing the comment would be that valuable space in the journal would be devoted to making a point that Goffman has already acknowledged elsewhere (that she did not employ probability sampling).” This annoyed me because they acknowledged the article was wrong, but decided it wasn’t important enough to notify readers. They don’t have a mechanism for notifying readers that does not “occupy space” in the journal (in 2015, or still today in 2024). https://familyinequality.wordpress.com/2015/08/26/comment-on-goffmans-survey-american-sociological-review-rejection-edition/


In my experience, many university IRBs specifically ban data sharing, even of deidentified data. Some even mandate data destruction when the study is complete. If openness is desirable, it should be allowed.

Of course this only applies to data the researchers collected, which is another reason to use secondary sources.


Wow! And here I am, trying to find a way to put data from a 30-year panel study in the public domain. I just need a small amount of funding to anonymize the dataset. Data destruction is totally antithetical to the amply demonstrated benefits arising from open evidence … across all disciplines.
